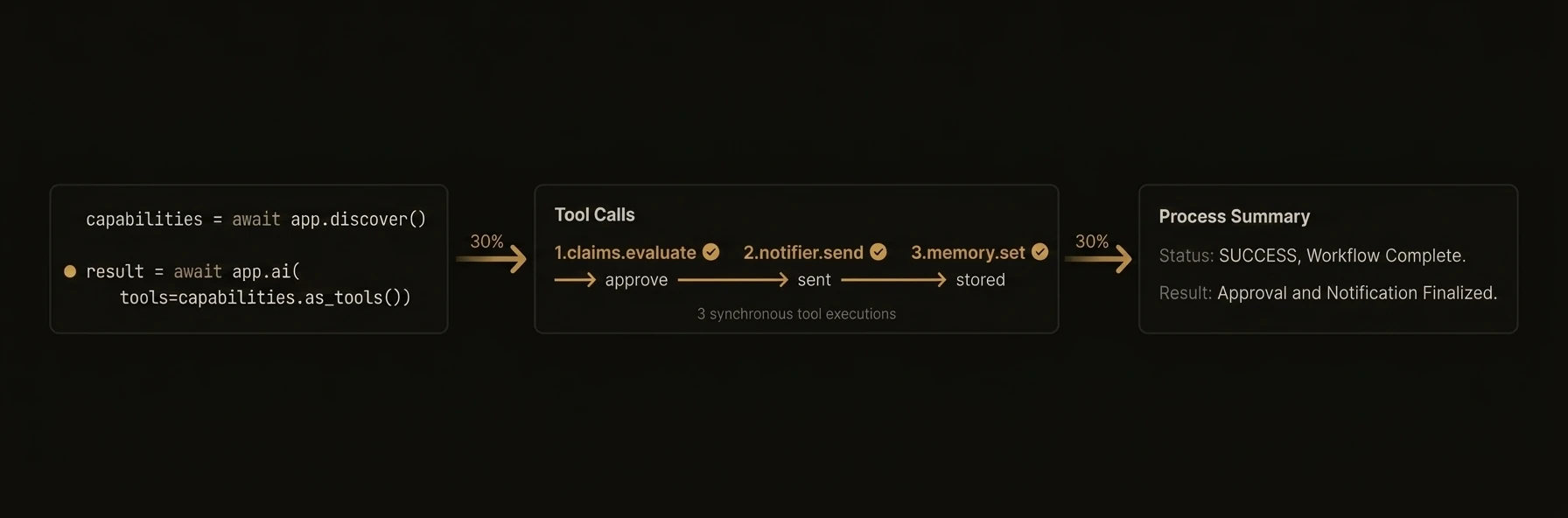

Tool Calling

AI auto-discovers and invokes other agents as tools through the control plane

The AI automatically discovers other agents in the network and invokes them as tools to complete a task. Pass tools="discover" and the SDK queries the control plane for every available agent capability, converts each one to an LLM-native tool schema, and runs an automatic execution loop: the LLM decides which agents to call, the SDK dispatches those calls and feeds the results back, and the loop repeats until the model returns a final answer or a configured limit (such as max_turns or max_tool_calls) is reached.
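To make the loop described above concrete, here is a minimal, SDK-free sketch in plain Python. It is not the AgentField implementation: the capability record, `to_tool_schema`, `run_tool_loop`, the fake LLM, and the fake dispatcher are all hypothetical stand-ins, and the tool definition shape is assumed to follow the common "function tool" layout with a JSON Schema for parameters. It only illustrates the discover, convert, dispatch, feed-back cycle with bounded turns and calls.

```python
import json
from dataclasses import dataclass, field

# Hypothetical capability record, standing in for what a control plane might return.
CAPABILITIES = [
    {
        "name": "weather_lookup",
        "description": "Current weather for a city",
        "input_schema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
]


def to_tool_schema(cap: dict) -> dict:
    """Convert a capability record into a function-style tool definition (assumed shape)."""
    return {
        "type": "function",
        "function": {
            "name": cap["name"],
            "description": cap["description"],
            "parameters": cap["input_schema"],
        },
    }


@dataclass
class Trace:
    total_turns: int = 0
    total_tool_calls: int = 0
    calls: list = field(default_factory=list)


def run_tool_loop(llm, dispatch, tools, user, max_turns=5, max_tool_calls=10):
    """Ask the model, run any tool calls it requests, feed results back, repeat."""
    messages = [{"role": "user", "content": user}]
    trace = Trace()
    for turn in range(1, max_turns + 1):
        trace.total_turns = turn
        reply = llm(messages, tools)
        if not reply.get("tool_calls"):
            return reply["content"], trace  # the model produced a final answer
        messages.append({"role": "assistant", "tool_calls": reply["tool_calls"]})
        for call in reply["tool_calls"]:
            if trace.total_tool_calls >= max_tool_calls:
                return "tool-call limit reached", trace
            result = dispatch(call["name"], call["arguments"])
            trace.total_tool_calls += 1
            trace.calls.append({"turn": turn, "tool": call["name"]})
            messages.append(
                {"role": "tool", "name": call["name"], "content": json.dumps(result)}
            )
    return "turn limit reached", trace


def fake_llm(messages, tools):
    """Stand-in model: requests one tool call, then answers from its result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        call = {"name": "weather_lookup", "arguments": {"city": "Tokyo"}}
        return {"content": None, "tool_calls": [call]}
    forecast = json.loads(tool_results[-1]["content"])
    return {"content": f"Tokyo: {forecast['summary']}", "tool_calls": []}


def fake_dispatch(name, arguments):
    """Stand-in for invoking another agent through the control plane."""
    return {"summary": f"sunny in {arguments['city']}"}


tools = [to_tool_schema(cap) for cap in CAPABILITIES]
answer, trace = run_tool_loop(fake_llm, fake_dispatch, tools, "What's the weather in Tokyo?")
print(answer)
print(trace.total_turns, trace.total_tool_calls)
```

In this sketch the limits are checked inside the loop, so a model that keeps requesting tools stops after `max_turns` turns or `max_tool_calls` calls instead of running indefinitely. How the real SDK reports a reached limit is not shown in this document.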

Python

from pydantic import BaseModel

from agentfield.tool_calling import ToolCallConfig

# `app` is the agent instance created elsewhere in your application.


@app.reasoner()
async def plan_trip(destination: str, budget: float) -> dict:
    # Auto-discover all agents in the network; the LLM picks which to call
    result = await app.ai(
        system="You are a travel planner. Use available tools to build an itinerary.",
        user=f"Plan a 5-day trip to {destination} under ${budget}",
        tools="discover",  # finds flight-search, hotel-booking, weather, etc.
    )

    # Full trace observability: see exactly what the AI did
    print(f"{result.trace.total_turns} turns, {result.trace.total_tool_calls} tool calls")
    for call in result.trace.calls:
        status = "OK" if not call.error else f"ERR: {call.error}"
        print(f" [{call.turn}] {call.tool_name} ({call.latency_ms:.0f}ms) -> {status}")

    return {"itinerary": result.text}


# The snippets below use `await`, so run them inside a reasoner or another async function.

# Filtered discovery: only expose specific capabilities by tag
result = await app.ai(
    user="What's the weather in Tokyo and translate the forecast to Spanish?",
    tools=ToolCallConfig(
        tags=["weather", "translation"],  # only matching agents are visible to the LLM
        max_turns=5,
        max_tool_calls=10,
    ),
)

# Lazy schema hydration: for large registries (100+ tools)
result = await app.ai(
    user="Help me analyze this dataset.",
    tools=ToolCallConfig(
        schema_hydration="lazy",  # send names and descriptions first
        max_candidate_tools=50,  # present at most 50 tools
        max_hydrated_tools=10,  # full schemas only for the top 10 selected
    ),
)


# Combine tool calling with structured output
class TravelPlan(BaseModel):
    destination: str
    activities: list[str]
    estimated_cost: float


plan = await app.ai(
    user="Plan a weekend in Barcelona.",
    tools="discover",  # gather data via tools
    schema=TravelPlan,  # return a validated TravelPlan
)

print(plan.estimated_cost)  # 847.50, a typed float

TypeScript

agent.reasoner('planTrip', async (ctx) => {
  // Auto-discover agents; the LLM orchestrates them autonomously
  const result = await ctx.aiWithTools(
    `Plan a 5-day trip to ${ctx.input.destination} under $${ctx.input.budget}`,
    {
      system: 'You are a travel planner. Use available tools to build an itinerary.',
      tools: 'discover',
    }
  );

  // Full trace: see every tool call and its latency
  console.log(`${result.trace.totalTurns} turns, ${result.trace.totalToolCalls} calls`);
  for (const call of result.trace.calls) {
    console.log(` [${call.turn}] ${call.toolName} (${call.latencyMs}ms)`);
  }

  // Filtered discovery: only expose specific agent tags
  const weather = await ctx.aiWithTools(
    'What is the weather in Tokyo? Translate to Spanish.',
    {
      tools: { tags: ['weather', 'translation'], maxTurns: 5 },
    }
  );

  // Lazy hydration for large registries
  const analysis = await ctx.aiWithTools(
    'Help me analyze this dataset.',
    {
      tools: {
        schemaHydration: 'lazy', // names first, schemas on demand
        maxCandidateTools: 50,
        maxHydratedTools: 10,
      },
    }
  );

  return { itinerary: result.text, toolCalls: result.trace.totalToolCalls };
});

Go

package main

import (
	"context"
	"fmt"
	"log"

	"github.com/Agent-Field/agentfield/sdk/go/agent"
	"github.com/Agent-Field/agentfield/sdk/go/ai"
)

func main() {
	app, err := agent.New(agent.Config{
		NodeID:        "travel-planner",
		Version:       "1.0.0",
		AgentFieldURL: "http://localhost:8080",
		AIConfig:      ai.DefaultConfig(),
	})
	if err != nil {
		log.Fatal(err) // fail fast instead of silently discarding the error
	}

	app.RegisterReasoner("planTrip", func(ctx context.Context, input map[string]any) (any, error) {
		// Missing or mistyped inputs fall back to their zero values.
		destination, _ := input["destination"].(string)
		budget, _ := input["budget"].(float64)
		prompt := fmt.Sprintf("Plan a 5-day trip to %s under $%.0f", destination, budget)

		// Discover agents, convert to tool schemas, run the tool-call loop
		resp, trace, err := app.AIWithTools(ctx, prompt, ai.ToolCallConfig{
			MaxTurns:     10,
			MaxToolCalls: 25,
		})
		if err != nil {
			return nil, err
		}

		fmt.Printf("%d turns, %d tool calls\n", trace.TotalTurns, trace.TotalToolCalls)
		for _, call := range trace.Calls {
			fmt.Printf(" [%d] %s (%.0fms)\n", call.Turn, call.ToolName, call.LatencyMs)
		}

		return map[string]any{
			"itinerary": resp.Choices[0].Message.Content[0].Text,
		}, nil
	})

	app.Serve(context.Background())
}

What just happened

The SDK discovered callable capabilities, converted them into tool definitions for the model, executed the agent calls the model chose, and returned both the final answer and the trace metadata. The example therefore shows more than plain tool use: the number of turns and the number of tool calls are both bounded, and the execution details are recorded so the run can be replayed and inspected. A trace for a run like this might look as follows:

{
  "total_turns": 3,
  "total_tool_calls": 2,
  "calls": [
    { "turn": 1, "tool": "flights.search", "latency_ms": 184 },
    { "turn": 2, "tool": "hotels.search", "latency_ms": 231 }
  ]
}
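Because the trace is plain structured data, it can be summarized with a few lines of code. The sketch below is illustrative only: it parses the JSON shown above with the standard library and reports total tool latency and the slowest call. The helper name `summarize_trace` and the summary keys are hypothetical, and the field names are taken from the JSON example rather than from any SDK type.

```python
import json

# The trace from the example above, copied verbatim.
TRACE_JSON = """
{
  "total_turns": 3,
  "total_tool_calls": 2,
  "calls": [
    { "turn": 1, "tool": "flights.search", "latency_ms": 184 },
    { "turn": 2, "tool": "hotels.search", "latency_ms": 231 }
  ]
}
"""


def summarize_trace(trace: dict) -> dict:
    """Aggregate per-call latency and check that the call list matches the counter."""
    calls = trace["calls"]
    slowest = max(calls, key=lambda c: c["latency_ms"]) if calls else None
    return {
        "tool_latency_ms": sum(c["latency_ms"] for c in calls),
        "slowest_tool": slowest["tool"] if slowest else None,
        "counts_match": len(calls) == trace["total_tool_calls"],
    }


summary = summarize_trace(json.loads(TRACE_JSON))
print(summary)
# {'tool_latency_ms': 415, 'slowest_tool': 'hotels.search', 'counts_match': True}
```

Note that the example trace has three turns but only two tool calls; presumably the last turn is the one in which the model returns its final answer without requesting another tool.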