Media Generation

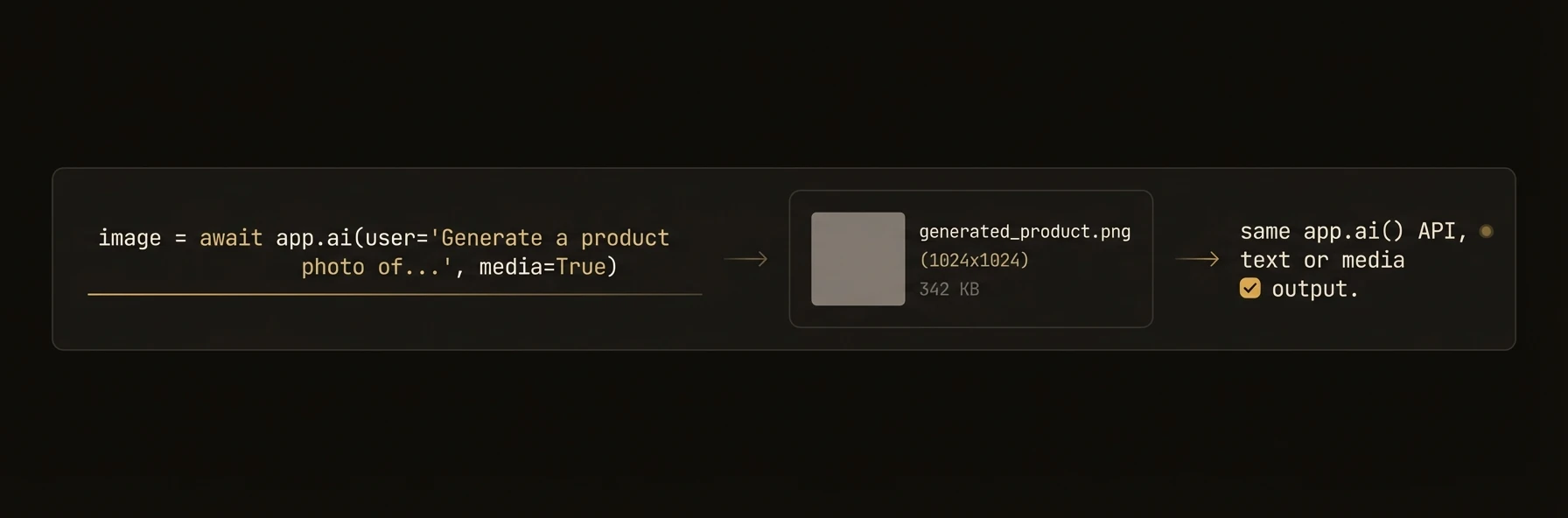

Generate images, video, audio, and music with a unified API backed by pluggable media providers across Python, TypeScript, and Go.

Agents that generate reports, product listings, marketing content, or customer-facing assets often need more than text. AgentField's media generation API lets you create images, narrate text, produce video, generate music, and transcribe audio through a unified interface backed by pluggable providers like fal.ai, OpenRouter, DALL-E, and ElevenLabs.
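In the examples that follow, the Python calls pass model names with an `openrouter/` prefix, while the TypeScript and Go examples hand the bare model name to an OpenRouter provider object. One plausible reading is that the leading path segment selects the provider. The sketch below illustrates that idea only; `PROVIDER_PREFIXES`, `ModelRef`, and `split_model` are hypothetical names, and the `fal` prefix is an assumption, so none of this should be read as AgentField's actual routing code.

```python
from typing import NamedTuple, Optional

# Hypothetical prefix table for illustration; not AgentField's real registry.
# "openrouter" appears in the examples on this page; "fal" is an assumption.
PROVIDER_PREFIXES = ("openrouter", "fal")


class ModelRef(NamedTuple):
    provider: Optional[str]  # None means "no recognized prefix"
    model: str               # model name as the provider would receive it


def split_model(model: str) -> ModelRef:
    """Split 'provider/model/name' into (provider, 'model/name')."""
    head, sep, rest = model.partition("/")
    if sep and head in PROVIDER_PREFIXES:
        return ModelRef(head, rest)
    # No known prefix: leave the model string untouched.
    return ModelRef(None, model)


if __name__ == "__main__":
    print(split_model("openrouter/google/gemini-2.5-flash-image-preview"))
    print(split_model("dall-e-3"))
```

Under this reading, stripping the prefix from a Python model string yields the same model name that the TypeScript and Go examples pass directly.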

Python:

from agentfield import Agent, AIConfig

app = Agent(
    node_id="product-listing-generator",
    ai_config=AIConfig(
        fal_api_key="your-fal-key",  # or FAL_KEY env var
        openrouter_api_key="your-or-key",  # or OPENROUTER_API_KEY env var
    ),
)

@app.reasoner()
async def generate_product_listing(product: dict) -> dict:
    # Generate a product image via OpenRouter (Gemini)
    image = await app.ai_generate_image(
        prompt=f"Professional product photo: {product['name']}",
        model="openrouter/google/gemini-2.5-flash-image-preview",
    )
    # Guard against an empty result before saving, as with the other assets
    if image.images:
        image.images[0].save(f"/output/{product['id']}_hero.png")

    # Generate a short product demo video via OpenRouter
    video = await app.ai_generate_video(
        prompt=f"Product demonstration: {product['name']} in use",
        model="openrouter/kling-video/v2.0/master",
        duration=10,
    )
    if video.videos:
        video.videos[0].save(f"/output/{product['id']}_demo.mp4")

    # Generate audio narration
    audio = await app.ai_generate_audio(
        text=f"Introducing {product['name']}: {product['description']}",
        model="openrouter/openai/tts-1",
        voice="alloy",
    )
    if audio.audio:
        audio.audio.save(f"/output/{product['id']}_narration.wav")

    # Generate background music
    music = await app.ai_generate_music(
        prompt="Upbeat corporate background music for product video",
        model="openrouter/google/lyria-3-pro",
        duration=30,
    )
    if music.audio:
        music.audio.save(f"/output/{product['id']}_bgm.wav")

    # Each URL is None when the corresponding asset was not produced
    return {
        "image_url": image.images[0].url if image.images else None,
        "video_url": video.videos[0].url if video.videos else None,
        "audio_url": audio.audio.url if audio.audio else None,
        "music_url": music.audio.url if music.audio else None,
    }

TypeScript:

import { Agent } from "@agentfield/sdk";

import { OpenRouterMediaProvider, MediaRouter } from "@agentfield/sdk";

const agent = new Agent({ nodeId: "media-agent" });

// Set up media provider
const media = new OpenRouterMediaProvider(); // reads OPENROUTER_API_KEY env var

agent.reasoner("generateAssets", async (ctx) => {
  // Generate product image
  const image = await media.generateImage({
    prompt: `Professional product photo: ${ctx.input.name}`,
    model: "google/gemini-2.5-flash-image-preview",
  });
  console.log(`Generated ${image.images.length} image(s)`);

  // Generate product demo video (async polling)
  const video = await media.generateVideo({
    prompt: `Product demo: ${ctx.input.name} in use`,
    model: "kling-video/v2.0/master",
    duration: 10,
    timeout: 600_000,
  });
  console.log(`Video: ${video.videos?.[0]?.url}`);

  // Generate audio narration (SSE streaming)
  const audio = await media.generateAudio({
    text: `Introducing ${ctx.input.name}`,
    model: "openai/tts-1",
    voice: "alloy",
  });

  return {
    imageUrl: image.images[0]?.url,
    videoUrl: video.videos?.[0]?.url,
    hasAudio: !!audio.audio,
  };
});

agent.serve();

Go:

package main

import (
	"context"
	"fmt"
	"log"
	"os"

	"github.com/Agent-Field/agentfield/sdk/go/agent"
	goai "github.com/Agent-Field/agentfield/sdk/go/ai"
)

func main() {
	app, err := agent.New(agent.Config{
		NodeID:  "media-agent",
		Version: "1.0.0",
	})
	if err != nil {
		log.Fatal(err)
	}

	// Set up media provider
	media, err := goai.NewOpenRouterMediaProvider(os.Getenv("OPENROUTER_API_KEY"))
	if err != nil {
		log.Fatal(err)
	}

	app.RegisterReasoner("generateAssets", func(ctx context.Context, input map[string]any) (any, error) {
		// A missing or non-string "name" falls back to the empty string
		name, _ := input["name"].(string)

		// Generate product image
		image, err := media.GenerateImage(ctx, goai.ImageRequest{
			Prompt: fmt.Sprintf("Professional product photo: %s", name),
			Model:  "google/gemini-2.5-flash-image-preview",
		})
		if err != nil {
			return nil, err
		}

		// Generate demo video (async polling)
		video, err := media.GenerateVideo(ctx, goai.VideoRequest{
			Prompt:   fmt.Sprintf("Product demo: %s in use", name),
			Model:    "kling-video/v2.0/master",
			Duration: 10,
		})
		if err != nil {
			return nil, err
		}

		// Generate audio narration (SSE streaming)
		audio, err := media.GenerateAudio(ctx, goai.AudioRequest{
			Text:  fmt.Sprintf("Introducing %s", name),
			Model: "openai/tts-1",
			Voice: "alloy",
		})
		if err != nil {
			return nil, err
		}

		return map[string]any{
			"images": len(image.Images),
			"video":  len(video.Videos) > 0,
			"audio":  audio.Audio != nil,
		}, nil
	})

	app.Serve(context.Background())
}

What you get back

Every SDK returns typed response objects with saveable files and URLs — not raw provider responses you have to normalize yourself.

{
  "image_url": "https://cdn.example.com/product_hero.webp",
  "video_url": "https://cdn.example.com/product_demo.mp4",
  "audio_url": "https://cdn.example.com/product_narration.wav",
  "music_url": "https://cdn.example.com/background_music.wav"
}
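Because the Python reasoner above falls back to `None` for any asset that was not produced, a caller should not assume every URL field is populated. The following is a minimal sketch of consuming such a payload with the standard library only. `asset_filenames` is a hypothetical helper, not part of the AgentField SDK, and it assumes the payload uses the `*_url` key convention shown above.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

URL_SUFFIX = "_url"


def asset_filenames(result: dict) -> dict:
    """Map each populated '*_url' field to the file name at the end of its URL.

    Hypothetical helper for illustration; not part of the AgentField SDK.
    """
    names = {}
    for key, url in result.items():
        if not key.endswith(URL_SUFFIX) or not url:
            continue  # skip unrelated keys and assets that were not produced
        kind = key[: -len(URL_SUFFIX)]  # "image_url" -> "image"
        names[kind] = PurePosixPath(urlparse(url).path).name
    return names


if __name__ == "__main__":
    payload = {
        "image_url": "https://cdn.example.com/product_hero.webp",
        "video_url": None,  # e.g. video generation returned nothing
        "audio_url": "https://cdn.example.com/product_narration.wav",
    }
    for kind, name in asset_filenames(payload).items():
        print(f"{kind}: {name}")
```

Skipping empty fields rather than raising keeps a partially successful run usable, for example a listing with an image and narration but no video.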