Building Blocks

Reasoners

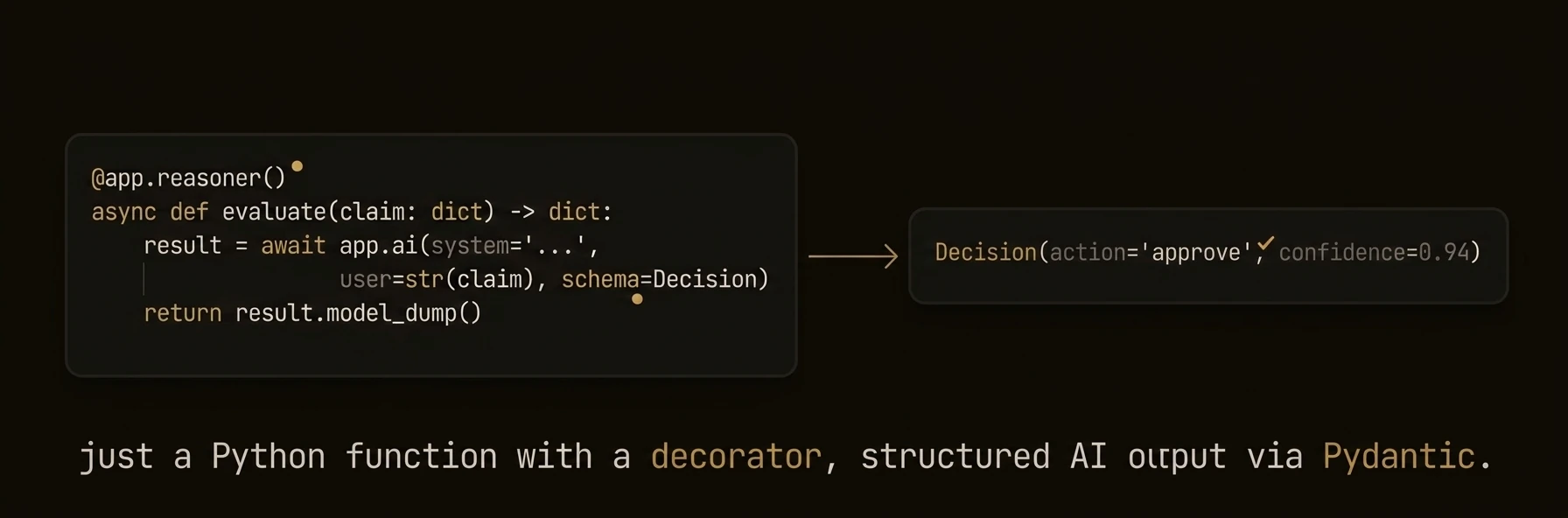

AI-powered functions that turn LLM calls into typed, auditable API endpoints with automatic workflow tracking, schema generation, and execution context.

The real production problem is not “how do I call a model?” It is “which parts of this workflow need AI judgment, and which parts need deterministic control?” Reasoners are where that boundary lives.

They are not just LLM wrappers. A reasoner combines AI analysis with your routing, validation, escalation, and side effects, then runs that workflow with full execution context and auditability.

Python:

```python
from agentfield import Agent, AIConfig
from pydantic import BaseModel

app = Agent(
    node_id="support-triage",
    ai_config=AIConfig(model="anthropic/claude-sonnet-4-20250514"),
)


class TriageDecision(BaseModel):
    priority: str
    team: str
    escalate: bool
    reasoning: str


@app.reasoner(tags=["support", "triage"])
async def triage_ticket(subject: str, body: str, account_tier: str) -> dict:
    # AI judgment: the model fills in the TriageDecision schema.
    decision = await app.ai(
        system="You triage support tickets for urgency, routing, and escalation risk.",
        user=f"Tier: {account_tier}\nSubject: {subject}\n\n{body}",
        schema=TriageDecision,
    )

    # Deterministic control: your code decides what an escalation triggers.
    if decision.escalate:
        await app.call(
            "escalation.create_case",
            subject=subject,
            priority=decision.priority,
            reasoning=decision.reasoning,
        )

    # Execution note for observability.
    app.note(
        f"Triage decision priority={decision.priority} team={decision.team}",
        ["triage", decision.priority],
    )

    return decision.model_dump()
```

TypeScript:

```typescript
import { Agent } from '@agentfield/sdk';
import { z } from 'zod';

const agent = new Agent({
  nodeId: 'support-triage',
  aiConfig: { provider: 'anthropic', model: 'claude-sonnet-4-20250514' },
});

agent.reasoner('triageTicket', async (ctx) => {
  const { subject, body, accountTier } = ctx.input;

  // AI judgment: the model fills in the Zod schema.
  const decision = await ctx.ai(
    `Tier: ${accountTier}\nSubject: ${subject}\n\n${body}`,
    {
      system: 'You triage support tickets for urgency, routing, and escalation risk.',
      schema: z.object({
        priority: z.string(),
        team: z.string(),
        escalate: z.boolean(),
        reasoning: z.string(),
      }),
    },
  );

  // Deterministic control: your code decides what an escalation triggers.
  if (decision.escalate) {
    await ctx.call('escalation.createCase', {
      subject,
      priority: decision.priority,
      reasoning: decision.reasoning,
    });
  }

  // Execution note for observability.
  ctx.note(`Triage decision priority=${decision.priority} team=${decision.team}`, [
    'triage',
    decision.priority,
  ]);

  return decision;
}, {
  tags: ['support', 'triage'],
  description: 'Classify and route a support ticket with structured output',
});
```

Go:

```go
// Fragment: `a` is an agent constructed elsewhere; setup and imports are omitted.
a.RegisterReasoner("triage_ticket", func(ctx context.Context, input map[string]any) (any, error) {
	subject, _ := input["subject"].(string)
	body, _ := input["body"].(string)
	tier, _ := input["account_tier"].(string)

	// This snippet passes no schema option; it returns whatever a.AI produces.
	result, err := a.AI(ctx, fmt.Sprintf("Tier: %s\nSubject: %s\n\n%s", tier, subject, body),
		ai.WithSystem("You triage support tickets for urgency, routing, and escalation risk."),
	)
	if err != nil {
		return nil, err
	}
	return result, nil
},
	agent.WithDescription("Classify and route a support ticket with structured output"),
	agent.WithReasonerTags("support", "triage"),
)
```

What just happened

- AI produced a typed triage decision instead of free-form text

- Your code handled the escalation side effect deterministically

- The reasoner emitted an execution note for observability

- AgentField attached execution IDs, workflow context, and an HTTP target automatically
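Because the escalation branch is ordinary code, it can be exercised without calling a model at all. The sketch below illustrates that split using only the standard library. It is not AgentField API: `Decision`, `route`, and the `create_case` callback are hypothetical stand-ins that mirror the triage example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Decision:
    """Hypothetical stand-in for the TriageDecision schema."""
    priority: str
    team: str
    escalate: bool
    reasoning: str


def route(decision: Decision, subject: str, create_case: Callable[..., None]) -> dict:
    """Deterministic half of the workflow: no model call happens here."""
    if decision.escalate:
        create_case(
            subject=subject,
            priority=decision.priority,
            reasoning=decision.reasoning,
        )
    return {"team": decision.team, "escalated": decision.escalate}


# Record side effects in a list instead of calling a real escalation service.
cases = []


def record_case(**kwargs) -> None:
    cases.append(kwargs)


urgent = Decision("critical", "support-engineering", True,
                  "Enterprise customer reporting data loss")
routine = Decision("low", "billing", False, "Invoice question")

urgent_outcome = route(urgent, "Data missing after upgrade", record_case)
routine_outcome = route(routine, "Where is my invoice?", record_case)
```

Only the decision marked `escalate=True` reaches `record_case`, so the side effect can be checked in a unit test with hand-built decisions, independent of what any model returns.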

Example target and result shape:

```json
{
  "target": "support-triage.triage_ticket",
  "execution_id": "exec_a1b2c3",
  "result": {
    "priority": "critical",
    "team": "support-engineering",
    "escalate": true,
    "reasoning": "Enterprise customer reporting data loss"
  }
}
```
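A caller can load that response into a typed value before acting on it. The following is a minimal sketch, assuming the response arrives as JSON in exactly the shape shown and that the target reads as `<node_id>.<reasoner_name>`. `TriageResult` and `parse_execution` are hypothetical helper names, not part of the AgentField SDK.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class TriageResult:
    """Hypothetical client-side mirror of the result fields."""
    priority: str
    team: str
    escalate: bool
    reasoning: str


def parse_execution(raw: str):
    """Split the target into node and reasoner, and check the result fields."""
    payload = json.loads(raw)
    # Assumption: the target is "<node_id>.<reasoner_name>".
    node_id, reasoner = payload["target"].split(".", 1)
    # Raises TypeError if the result has missing or unexpected keys.
    result = TriageResult(**payload["result"])
    if not isinstance(result.escalate, bool):
        raise ValueError("escalate must be a boolean")
    return node_id, reasoner, payload["execution_id"], result


raw = """
{
  "target": "support-triage.triage_ticket",
  "execution_id": "exec_a1b2c3",
  "result": {
    "priority": "critical",
    "team": "support-engineering",
    "escalate": true,
    "reasoning": "Enterprise customer reporting data loss"
  }
}
"""

node_id, reasoner, execution_id, result = parse_execution(raw)
```

Keeping the `execution_id` alongside the parsed result lets a caller correlate its own logs with the execution that produced the decision.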