
AI Generation

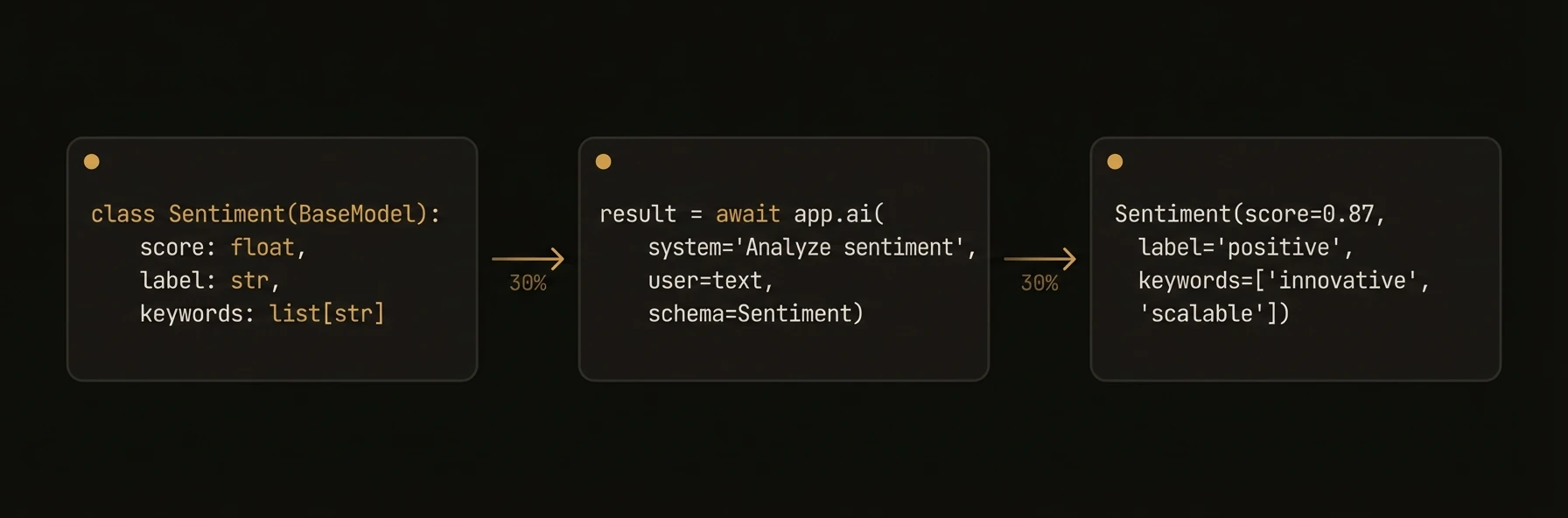

Text generation and structured output with app.ai() — the universal method for LLM calls

Call any LLM with a single method. Pass a prompt, get text back. Pass a schema, get a validated object back. Override the model, temperature, or any other parameter per-call without changing your agent config. Works with 100+ models via LiteLLM (Python), Vercel AI SDK (TypeScript), or OpenAI-compatible APIs (Go).
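Here is a minimal, stdlib-only sketch of what "pass a schema, get a validated object back" involves. It parses the model's JSON reply, checks each field against the schema, and coerces types so that `score` arrives as a float even if the model returned `"82.5"`. `parse_structured` is a hypothetical helper, and a dataclass stands in for the Pydantic model. This is not the SDK's code: with the real `app.ai()`, the Pydantic model you pass fills this role.

```python
import json
from dataclasses import dataclass, fields
from typing import Literal, get_args, get_origin, get_type_hints


@dataclass
class LeadScore:
    score: float
    reasoning: str
    next_action: str
    urgency: Literal["high", "medium", "low"]


def parse_structured(raw: str, schema: type):
    """Hypothetical helper: validate a model's raw JSON reply against a dataclass schema."""
    data = json.loads(raw)
    hints = get_type_hints(schema)
    values = {}
    for f in fields(schema):
        if f.name not in data:
            raise ValueError(f"missing field: {f.name}")
        value, expected = data[f.name], hints[f.name]
        if get_origin(expected) is Literal:
            # Enum-like fields must match one of the allowed values exactly
            if value not in get_args(expected):
                raise ValueError(f"{f.name}={value!r} not in {get_args(expected)}")
        elif expected is float:
            # Accept ints and numeric strings such as "82.5"; float() raises on anything else
            value = float(value)
        elif not isinstance(value, expected):
            raise TypeError(f"{f.name}: expected {expected.__name__}")
        values[f.name] = value
    return schema(**values)


raw = '{"score": "82.5", "reasoning": "Viewed pricing 3x", "next_action": "Schedule demo", "urgency": "high"}'
lead = parse_structured(raw, LeadScore)
print(type(lead.score).__name__, lead.score)  # float 82.5
```

Rejecting a reply outright, rather than passing a half-valid dict along, is what lets calling code treat `lead.score` as a number without further checks.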

Python:

```python
from typing import Literal

from pydantic import BaseModel


class LeadScore(BaseModel):
    score: float  # 0-100 likelihood to convert
    reasoning: str  # why this score
    next_action: str  # recommended sales action
    urgency: Literal["high", "medium", "low"]


# Runs inside an async function; `app` is your Agent, and ticket_text,
# TicketCategory, RootCauseAnalysis, and incident_summary are defined elsewhere.

# Structured output: pass a schema, get a validated, typed object back
lead = await app.ai(
    system="You are a B2B sales analyst scoring inbound leads.",
    user="Company: Acme Corp, 500 employees, viewed pricing page 3x this week",
    schema=LeadScore,
)
print(lead.score)  # 82.5 (a float, not a string)
print(lead.next_action)  # "Schedule demo within 24 hours"

# Override the model per call: a cheap model for fast classification
category = await app.ai(
    user=ticket_text,
    schema=TicketCategory,
    model="openai/gpt-4o-mini",  # far cheaper than frontier models, good enough for routing
)

# A stronger model for deep analysis: same method, different model
analysis = await app.ai(
    system="Provide root cause analysis with remediation steps.",
    user=ticket_text,
    schema=RootCauseAnalysis,
    model="anthropic/claude-sonnet-4-20250514",  # stronger reasoning for hard problems
)

# Streaming: real-time token delivery for interactive UIs
response = await app.ai(
    system="Write a detailed post-mortem.",
    user=incident_summary,
    stream=True,
)
async for chunk in response:
    # delta.content can be None (e.g. on the final chunk), so print "" instead
    print(chunk.choices[0].delta.content or "", end="")

# Automatic fallback chain: if the primary model fails, the next one is tried
app = Agent(
    node_id="resilient-agent",
    ai_config=AIConfig(
        model="anthropic/claude-sonnet-4-20250514",
        fallback_models=["openai/gpt-4o", "deepseek/deepseek-chat"],
        max_cost_per_call=0.05,  # hard cost cap per call
    ),
)
```

TypeScript:

```typescript
import { z } from 'zod';

const LeadScore = z.object({
  score: z.number(), // 0-100 likelihood to convert
  reasoning: z.string(), // why this score
  nextAction: z.string(), // recommended sales action
  urgency: z.enum(['high', 'medium', 'low']),
});

// `agent` is your agent instance; ticketText, TicketCategory, and
// RootCauseAnalysis are defined elsewhere.
agent.reasoner('scoreInboundLead', async (ctx) => {
  // Structured output: Zod schema in, validated typed object out
  const lead = await ctx.ai(ctx.input.leadDescription, {
    system: 'You are a B2B sales analyst scoring inbound leads.',
    schema: LeadScore,
  });
  console.log(lead.score); // 82.5 (typed number, not string)

  // Override the model per call: cheap for routing, powerful for analysis
  const category = await ctx.ai(ticketText, {
    schema: TicketCategory,
    model: 'gpt-4o-mini', // fast and cheap for classification
  });

  const analysis = await ctx.ai(ticketText, {
    system: 'Provide root cause analysis with remediation steps.',
    schema: RootCauseAnalysis,
    model: 'claude-sonnet-4-20250514', // deep reasoning
  });

  // Streaming: real-time token delivery
  const stream = await ctx.aiStream('Write a post-mortem.', {
    system: 'You are an SRE writing an incident report.',
  });
  for await (const chunk of stream) {
    process.stdout.write(chunk);
  }

  return lead;
});
```

Go:

```go
type LeadScore struct {
	Score      float64 `json:"score"`       // 0-100 likelihood to convert
	Reasoning  string  `json:"reasoning"`   // why this score
	NextAction string  `json:"next_action"` // recommended sales action
	Urgency    string  `json:"urgency"`     // high | medium | low
}

// Inside a function that returns error; ticketText, TicketCategory, and
// RootCauseAnalysis are defined elsewhere.

// Structured output: Go struct in, validated JSON out
resp, err := client.Complete(ctx,
	"Company: Acme Corp, 500 employees, viewed pricing page 3x",
	ai.WithSystem("You are a B2B sales analyst scoring inbound leads."),
	ai.WithSchema(LeadScore{}),
)
if err != nil {
	return err
}
var lead LeadScore
resp.JSON(&lead)
fmt.Printf("Score: %.1f, Action: %s\n", lead.Score, lead.NextAction)

// Override the model per call: cheap for classification
catResp, err := client.Complete(ctx, ticketText,
	ai.WithModel("gpt-4o-mini"), // fast routing
	ai.WithSchema(TicketCategory{}),
)
if err != nil {
	return err
}

// A stronger model for hard problems
analysisResp, err := client.Complete(ctx, ticketText,
	ai.WithModel("anthropic/claude-sonnet-4-20250514"),
	ai.WithSystem("Provide root cause analysis."),
	ai.WithSchema(RootCauseAnalysis{}),
	ai.WithTemperature(0.0), // minimizes sampling randomness; output is not guaranteed identical
)
if err != nil {
	return err
}
```

What just happened

The same AI call (`app.ai()` in Python, `ctx.ai()` in TypeScript, `client.Complete()` in Go) handled three common production modes without any change to the surrounding code: typed extraction with a schema, a cheap model override for simple classification, and a stronger model override for deeper analysis. You do not need a separate client stack for each path; only the per-call arguments change. A condensed view of the three results:

```json
{
  "lead_score": {
    "score": 8.7,
    "next_action": "schedule_demo"
  },
  "ticket_category": {
    "category": "billing"
  },
  "analysis": {
    "root_cause": "misconfigured webhook retry policy"
  }
}
```
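The per-task model choice and the fallback chain can be pictured without any SDK. The sketch below is hypothetical throughout: `ROUTES`, `pick_model`, `complete_with_fallback`, `ModelError`, and `fake_call` are illustrative names, not part of the SDK, and this is not how `fallback_models` is actually implemented. It only shows one plausible primary-then-fallbacks order, reusing the model ids from the examples above, with a fake call standing in for a real LLM request.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical routing table: task kind -> model id (ids taken from the examples above)
ROUTES = {
    "classify": "openai/gpt-4o-mini",  # cheap and fast
    "analyze": "anthropic/claude-sonnet-4-20250514",  # stronger reasoning
}


class ModelError(Exception):
    """Raised by a model call that fails (outage, rate limit, timeout)."""


def pick_model(task: str) -> str:
    """Route a task kind to a model id; unknown kinds get the cheap model."""
    return ROUTES.get(task, ROUTES["classify"])


def complete_with_fallback(
    prompt: str,
    models: Sequence[str],
    call: Callable[[str, str], str],
) -> Tuple[str, str]:
    """Try each model in order and return (model_used, text) from the first success."""
    errors: List[str] = []
    for model in models:
        try:
            return model, call(model, prompt)
        except ModelError as exc:
            errors.append(f"{model}: {exc}")  # record why, then try the next model
    raise ModelError("all models failed: " + "; ".join(errors))


def fake_call(model: str, prompt: str) -> str:
    """Stand-in for a real LLM call; simulates an outage on Anthropic models."""
    if model.startswith("anthropic/"):
        raise ModelError("503 overloaded")
    return f"[{model}] answer to: {prompt}"


chain = [pick_model("analyze"), "openai/gpt-4o", "deepseek/deepseek-chat"]
used, text = complete_with_fallback("Why did the webhook fail?", chain, fake_call)
print(used)  # openai/gpt-4o
```

Catching only a dedicated error type keeps bugs in your own code (a `KeyError`, say) from silently triggering a fallback; a real client would map provider failures such as rate limits or timeouts onto that type.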