Models

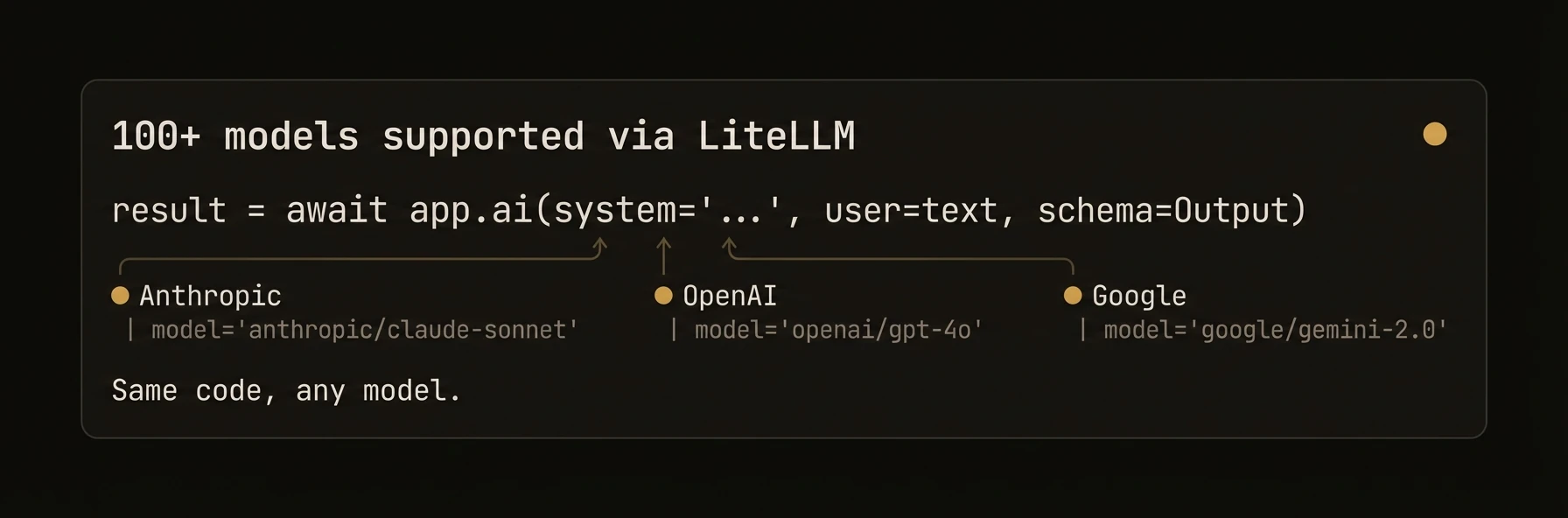

100+ LLMs via LiteLLM — model-agnostic configuration, cost controls, and rate limiting

Use any LLM. Switch models per-call. Set cost caps. Auto-retry on rate limits. AgentField is model-agnostic -- the Python SDK routes through LiteLLM for 100+ models from every major provider, TypeScript uses the Vercel AI SDK, and Go uses OpenAI-compatible HTTP APIs directly.
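As a rough mental model for "auto-retry on rate limits" combined with a fallback list, the sketch below retries one model with exponential backoff and then moves to the next model. This is not AgentField's implementation: `RateLimited`, `call_with_failover`, the backoff schedule, and the retry-then-failover ordering are all assumptions made for illustration.

```python
import time
from typing import Callable, Optional, Sequence


class RateLimited(Exception):
    """Hypothetical stand-in for a provider's rate-limit error."""


def call_with_failover(
    call: Callable[[str], str],
    models: Sequence[str],
    max_retries: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each model in order; retry rate-limited calls with backoff."""
    last_error: Optional[Exception] = None
    for model in models:
        for attempt in range(max_retries + 1):
            try:
                return call(model)
            except RateLimited as err:
                last_error = err
                # Back off only if another attempt on this model remains.
                if attempt < max_retries:
                    sleep(base_delay * 2 ** attempt)
            except Exception as err:
                # Any other error: stop retrying this model, try the next.
                last_error = err
                break
    raise RuntimeError("all models failed") from last_error
```

Passing `sleep` as a parameter keeps the sketch testable without real delays; a production version would likely also add jitter and respect any retry hint the provider returns.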

```python
from agentfield import Agent, AIConfig, HarnessConfig

# Agent-level config: defaults for all .ai() calls
app = Agent(
    node_id="production-agent",
    ai_config=AIConfig(
        model="anthropic/claude-sonnet-4-20250514",  # default model
        fallback_models=[  # auto-failover chain
            "openai/gpt-4o",
            "deepseek/deepseek-chat",
        ],
        max_cost_per_call=0.05,  # hard cap per call
        daily_budget=10.00,  # daily spend limit
        enable_rate_limit_retry=True,  # auto-retry with backoff
        rate_limit_max_retries=10,
        auto_inject_memory=["user_prefs", "conversation"],  # inject memory into prompts
    ),
    # Harness uses a DIFFERENT model config: coding agents have their own budget
    harness_config=HarnessConfig(
        provider="claude-code",
        model="sonnet",
        max_budget_usd=2.00,  # separate cost cap for coding tasks
        max_turns=30,
    ),
)

# Per-call model override: no agent reconfiguration needed
category = await app.ai(
    user=ticket_text,
    schema=TicketCategory,
    model="openai/gpt-4o-mini",  # cheap model for fast classification
)

analysis = await app.ai(
    user=ticket_text,
    schema=DeepAnalysis,
    model="anthropic/claude-sonnet-4-20250514",  # powerful model for reasoning
    temperature=0.0,  # deterministic
)

# Local models: zero data leaves your machine
local_app = Agent(
    node_id="local-agent",
    ai_config=AIConfig(
        model="ollama/llama3",
        api_base="http://localhost:11434",
    ),
)
```

```typescript
import { Agent } from '@agentfield/sdk';

const agent = new Agent({
  nodeId: 'production-agent',
  aiConfig: {
    provider: 'anthropic',
    model: 'claude-sonnet-4-20250514', // default model
    temperature: 0.3,
    maxTokens: 4096,
    enableRateLimitRetry: true, // auto-retry with backoff
    rateLimitMaxRetries: 20,
  },
});

agent.reasoner('analyze', async (ctx) => {
  // Per-call model override: cheap for routing, powerful for analysis
  const category = await ctx.ai(ticketText, {
    model: 'gpt-4o-mini', // fast + cheap
    schema: TicketCategory,
  });

  const analysis = await ctx.ai(ticketText, {
    model: 'claude-sonnet-4-20250514', // deep reasoning
    schema: DeepAnalysis,
    temperature: 0.0,
  });

  // Harness config: different model for coding tasks
  const fix = await agent.harness('Fix the failing test in auth.test.ts', {
    provider: 'codex',
    model: 'o4-mini',
    maxBudgetUsd: 1.00,
  });

  return { category, analysis };
});
```

```go
// Agent-level config
config := &ai.Config{
	APIKey:  os.Getenv("OPENROUTER_API_KEY"),
	BaseURL: "https://openrouter.ai/api/v1",
	Model:   "anthropic/claude-sonnet-4-20250514", // default model
}

client, _ := ai.NewClient(config)

// Per-call model override
catResp, _ := client.Complete(ctx, ticketText,
	ai.WithModel("gpt-4o-mini"), // cheap for classification
	ai.WithSchema(TicketCategory{}),
)

analysisResp, _ := client.Complete(ctx, ticketText,
	ai.WithModel("anthropic/claude-sonnet-4-20250514"), // powerful for analysis
	ai.WithTemperature(0.0),
	ai.WithSchema(DeepAnalysis{}),
)
```

| Provider | Python (LiteLLM) | TypeScript (Vercel AI) | Go (HTTP) |
|---|---|---|---|
| OpenAI | openai/gpt-4o | openai provider | Default |
| Anthropic | anthropic/claude-sonnet-4-20250514 | anthropic provider | Via OpenRouter |
| Google Gemini | gemini/gemini-2.5-pro | google provider | Via OpenRouter |
| Mistral | mistral/mistral-large-latest | mistral provider | Via OpenRouter |
| DeepSeek | deepseek/deepseek-chat | deepseek provider | Via OpenRouter |
| Groq | groq/llama-3.1-70b | groq provider | Via OpenRouter |
| xAI | xai/grok-2 | xai provider | Via OpenRouter |
| Cohere | cohere/command-r-plus | cohere provider | Via OpenRouter |
| OpenRouter | openrouter/... | openrouter provider | Native |
| Ollama | ollama/llama3 | ollama provider | Native |
| Azure OpenAI | azure/gpt-4o | Via OpenAI adapter | Via URL |
| AWS Bedrock | bedrock/... | N/A | N/A |
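The Python column of the table writes each model as a `provider/model` string. A minimal sketch of how such an identifier can be split is shown below, assuming the provider is everything before the first slash. `ModelRef` and `split_model_id` are hypothetical helpers for illustration, not part of AgentField or LiteLLM.

```python
from typing import NamedTuple, Optional


class ModelRef(NamedTuple):
    provider: Optional[str]  # None when the identifier has no prefix
    model: str


def split_model_id(model_id: str) -> ModelRef:
    """Split 'provider/model' on the first slash only."""
    provider, sep, model = model_id.partition("/")
    if not sep:
        # No slash at all: treat the whole string as a bare model name.
        return ModelRef(None, model_id)
    return ModelRef(provider, model)


print(split_model_id("anthropic/claude-sonnet-4-20250514"))
print(split_model_id("gpt-4o-mini"))
```

Splitting on the first slash only means a nested identifier keeps its remainder intact, so a routed name with a second slash still yields a single provider prefix followed by the full downstream model name.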

What just happened

The example sets a default model once and overrides it only where the task changes. That is the practical pattern: keep one baseline model for most calls, then switch to a cheaper or stronger model for an individual call instead of rebuilding your agent around provider-specific clients.

```json
{
  "default_model": "anthropic/claude-sonnet-4-20250514",
  "classification_override": "gpt-4o-mini",
  "analysis_override": "anthropic/claude-sonnet-4-20250514"
}
```
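The default-plus-override pattern can be sketched independently of any SDK. The example below assumes a per-call value simply replaces the agent-level default when one is supplied; `CallSettings` and `resolve` are hypothetical names, and AgentField's actual merge rules may differ.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class CallSettings:
    model: str
    temperature: Optional[float] = None


def resolve(defaults: CallSettings, **overrides) -> CallSettings:
    """Return a copy of defaults with non-None per-call overrides applied."""
    supplied = {k: v for k, v in overrides.items() if v is not None}
    return replace(defaults, **supplied)


# Agent-level baseline, set once.
defaults = CallSettings(model="anthropic/claude-sonnet-4-20250514")

# Cheap model for classification; the baseline itself is left untouched.
classify = resolve(defaults, model="openai/gpt-4o-mini")

# Same baseline model, but deterministic sampling for analysis.
analyze = resolve(defaults, temperature=0.0)
```

Keeping the settings object frozen means an override can never leak into later calls, which is the property the pattern relies on.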