Testing

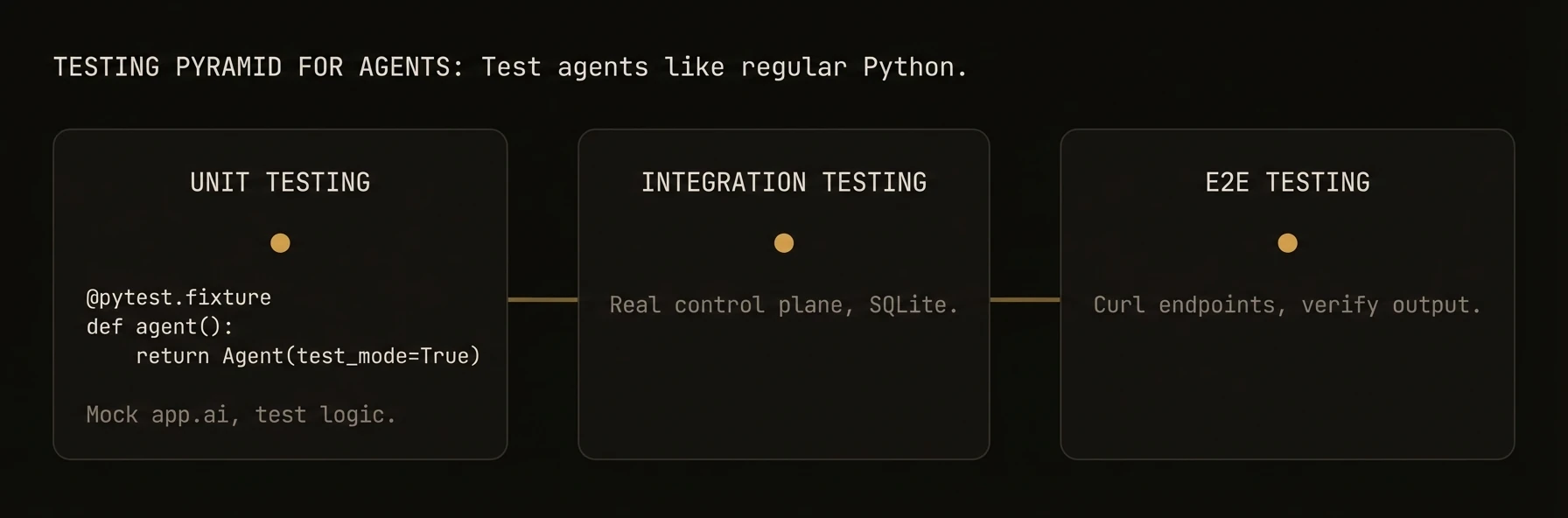

Patterns for unit testing, integration testing, and mocking AgentField agents.

Write deterministic, fast tests for your agents — from unit tests to full integration suites.

AgentField agents are regular Python functions decorated with @app.reasoner() and @app.skill(). This means you can test them with standard tools like pytest, mock LLM calls for deterministic assertions, and run integration tests against a local backend.

Unit Testing Reasoners

Reasoner functions receive an app context and input. To test them in isolation, create a test agent with mocked dependencies.

# src/agents/classifier.py
from agentfield import Agent, AIConfig
from pydantic import BaseModel

app = Agent(
    node_id="classifier",
    ai_config=AIConfig(model="anthropic/claude-sonnet-4-20250514"),
)


class Classification(BaseModel):
    category: str
    confidence: float


@app.reasoner()
async def classify(text: str) -> Classification:
    result = await app.ai(
        system="Classify the following text into a category.",
        user=text,
        schema=Classification,
    )
    return result

# tests/test_classifier.py
import pytest
from unittest.mock import AsyncMock, patch

from src.agents.classifier import app, classify, Classification


@pytest.mark.asyncio
async def test_classify_returns_valid_category():
    app.ai = AsyncMock(return_value=Classification(
        category="technical",
        confidence=0.95,
    ))

    result = await classify(text="How to configure Kubernetes pods")

    assert result.category == "technical"
    assert result.confidence >= 0.9
    app.ai.assert_called_once()

The key pattern: replace app.ai (and app.call) with AsyncMock instances so no real LLM calls happen. Your test runs in milliseconds and produces deterministic results.
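Assigning app.ai = AsyncMock(...) directly leaves the mock in place for every later test that imports the same app object. One way to scope the replacement to a single test is unittest.mock.patch.object, which restores the original attribute when the block exits. The sketch below is a minimal illustration under stated assumptions: FakeApp is a hypothetical stand-in for a real Agent so the example runs without the SDK installed, and the system and user keyword names simply mirror the classifier above.

```python
import asyncio
from dataclasses import dataclass
from unittest.mock import AsyncMock, patch


@dataclass
class Classification:
    category: str
    confidence: float


class FakeApp:
    """Hypothetical stand-in for an Agent; only the .ai attribute matters here."""

    async def ai(self, **kwargs):
        raise RuntimeError("real LLM call attempted in a test")


app = FakeApp()


async def classify(text: str) -> Classification:
    # Same shape as the reasoner above: delegate to app.ai and return its result.
    return await app.ai(
        system="Classify the following text into a category.",
        user=text,
    )


async def run_scoped_mock_demo() -> Classification:
    canned = Classification(category="technical", confidence=0.95)
    # patch.object swaps app.ai for the duration of the with-block only.
    with patch.object(app, "ai", new=AsyncMock(return_value=canned)) as mock_ai:
        result = await classify("How to configure Kubernetes pods")
        # Assert on what the reasoner sent, not only on what came back.
        mock_ai.assert_awaited_once()
        assert mock_ai.await_args.kwargs["user"] == "How to configure Kubernetes pods"
    return result


result = asyncio.run(run_scoped_mock_demo())
```

After the with-block exits, app.ai is the original method again, so a later test that forgets to mock will not silently reuse this test's canned response.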

What just happened

The test exercised the reasoner directly while replacing the model call with a mock. That keeps the feedback loop fast and makes the assertion about your logic, not about whether an external provider responded the same way twice.

{
  "execution_mode": "local_test",
  "llm_dependency": "mocked",
  "focus": "reasoner_logic"
}

Mocking AI Calls

app.ai() is the most common call to mock. Control exactly what the LLM "returns" so you can test downstream logic.

# tests/test_intake.py
import pytest
from unittest.mock import AsyncMock
from pydantic import BaseModel


class IntakeResult(BaseModel):
    contract_type: str
    parties: list[str]
    confident: bool


@pytest.mark.asyncio
async def test_intake_confident_path():
    """When .ai() is confident, skip the fallback."""
    from src.agents.intake import app, process_intake

    app.ai = AsyncMock(return_value=IntakeResult(
        contract_type="NDA",
        parties=["Acme Corp", "Globex Inc"],
        confident=True,
    ))
    app.call = AsyncMock()

    result = await process_intake(document="...")

    assert result.contract_type == "NDA"
    # Verify the fallback was NOT called
    app.call.assert_not_called()


@pytest.mark.asyncio
async def test_intake_fallback_when_not_confident():
    """When .ai() returns confident=False, escalate via cross-agent call."""
    from src.agents.intake import app, process_intake

    app.ai = AsyncMock(return_value=IntakeResult(
        contract_type="unknown",
        parties=[],
        confident=False,
    ))
    app.call = AsyncMock(return_value={
        "contract_type": "MSA",
        "parties": ["Acme Corp", "Globex Inc"],
        "confident": True,
    })

    result = await process_intake(document="...")

    assert result.contract_type == "MSA"
    app.call.assert_called_once()

Testing Multiple AI Calls in Sequence

When a reasoner makes several app.ai() calls, use side_effect to return different values for each call:

@pytest.mark.asyncio
async def test_multi_step_reasoning():
    # Classification and RiskScore are assumed to be defined alongside the reasoner.
    from src.agents.analyzer import app, analyze_document, Classification, RiskScore

    # Each awaited app.ai() call consumes the next item in the list, in order.
    app.ai = AsyncMock(side_effect=[
        Classification(category="legal", confidence=0.8),
        RiskScore(score=7, explanation="High liability exposure"),
    ])

    result = await analyze_document(text="...")

    assert app.ai.call_count == 2
    assert result.risk_score == 7

Mocking Cross-Agent Calls

app.call() invokes other agents over the network. Mock it to test your agent without starting dependent services.

# tests/test_orchestrator.py
import pytest
from unittest.mock import AsyncMock


@pytest.mark.asyncio
async def test_orchestrator_aggregates_results():
    from src.agents.orchestrator import app, run_analysis

    # Mock responses from two downstream agents
    app.call = AsyncMock(side_effect=[
        {"findings": ["Issue A"], "severity": "high"},
        {"findings": ["Issue B"], "severity": "low"},
    ])

    result = await run_analysis(document_id="doc-123")

    assert len(result.findings) == 2
    assert result.highest_severity == "high"

    # Verify correct agents were called
    calls = app.call.call_args_list
    assert calls[0].args[0] == "security-analyst.analyze"
    assert calls[1].args[0] == "compliance-analyst.analyze"

Testing Error Handling

Verify your agent handles downstream failures gracefully:

@pytest.mark.asyncio
async def test_handles_downstream_agent_failure():
    from src.agents.orchestrator import app, run_analysis

    app.call = AsyncMock(side_effect=ConnectionError("Agent unavailable"))

    result = await run_analysis(document_id="doc-123")

    assert result.status == "partial_failure"
    assert "Agent unavailable" in result.error_message

Integration Testing

For integration tests, run your agent against a real local backend using SQLite. This tests the full stack — routing, serialization, memory — without external dependencies.

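Set Up a Test Agent with SQLite

# tests/conftest.py
import pytest
from agentfield import Agent, AIConfig


# With asyncio_mode = "auto" (see the pyproject.toml configuration in this guide),
# a plain @pytest.fixture works for async fixtures. In pytest-asyncio's strict
# mode, use @pytest_asyncio.fixture instead.
@pytest.fixture
async def test_agent():
    """Create a test agent for local tests."""
    agent = Agent(
        node_id="test-agent",
        ai_config=AIConfig(model="anthropic/claude-sonnet-4-20250514"),
    )
    yield agent

Testing Memory Operations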

# tests/test_memory_integration.py
import pytest


# The integration marker lets CI select these tests with `pytest -m integration`.
@pytest.mark.integration
@pytest.mark.asyncio
async def test_memory_roundtrip(test_agent):
    await test_agent.memory.set("intake-status", {"step": "intake", "status": "done"})

    result = await test_agent.memory.get("intake-status")

    assert result["step"] == "intake"
    assert result["status"] == "done"


@pytest.mark.integration
@pytest.mark.asyncio
async def test_memory_default_value(test_agent):
    """get() returns the default when the key does not exist."""
    result = await test_agent.memory.get("nonexistent-key", default=None)

    assert result is None

Testing with the CLI

Run the control plane and your agent app locally, then hit the execution API with HTTP requests. This is useful for manual testing and debugging.

Start the Local Server

af server
python src/agents/classifier.py

This starts the control plane on http://localhost:8080 and registers your agent with it.
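When these two commands are launched from a script (for example, before an integration test run), requests sent too early will fail because the control plane is still starting. A minimal polling sketch follows, assuming the /api/v1/health endpoint used elsewhere in this guide answers with HTTP 200 once the server is ready. The helper names http_probe and wait_until_healthy are illustrative and are not part of the af CLI or the SDK.

```python
import time
import urllib.error
import urllib.request
from typing import Callable

HEALTH_URL = "http://localhost:8080/api/v1/health"


def http_probe(url: str = HEALTH_URL, timeout: float = 2.0) -> bool:
    """Return True if the URL answers with HTTP 200, False on any connection error."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        return False


def wait_until_healthy(
    probe: Callable[[], bool] = http_probe,
    attempts: int = 30,
    delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll probe() until it succeeds or the attempts run out."""
    for attempt in range(attempts):
        if probe():
            return True
        # Do not sleep after the final failed attempt.
        if attempt < attempts - 1:
            sleep(delay)
    return False
```

The probe and sleep parameters are injectable so the polling logic itself can be unit tested without a running server or real delays.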

Send Test Requests

# Call a reasoner
curl -X POST http://localhost:8080/api/v1/execute/classifier.classify \
  -H "Content-Type: application/json" \
  -d '{"input": {"text": "How to configure Kubernetes pods"}}'

# Check agent health
curl http://localhost:8080/api/v1/health

# List registered nodes
curl http://localhost:8080/api/ui/v1/nodes

Run the Control Plane with Verbose Logging

af server --verbose

CI/CD Patterns

Run your agent tests in CI with predictable results. The key: mock all LLM calls so tests are fast, free, and deterministic.

Project Structure

my-agent/
  src/
    agents/
      classifier.py
      orchestrator.py
  tests/
    conftest.py
    test_classifier.py
    test_orchestrator.py
    test_integration.py
  pyproject.toml

pyproject.toml Test Configuration

[tool.pytest.ini_options]
asyncio_mode = "auto"
testpaths = ["tests"]
markers = [
    "integration: marks tests that use a real backend (deselect with '-m \"not integration\"')",
    "slow: marks tests that take > 5s",
]

[project.optional-dependencies]
test = [
    "pytest>=8.0",
    "pytest-asyncio>=0.23",
    "pytest-cov>=5.0",
]

GitHub Actions Workflow

# .github/workflows/test.yml
name: Agent Tests

on: [push, pull_request]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - name: Install dependencies
        run: pip install -e ".[test]"
      - name: Run unit tests
        run: pytest -m "not integration" --cov=src --cov-report=term-missing
      - name: Run integration tests
        run: pytest -m integration
      - name: Check coverage
        run: pytest --cov=src --cov-fail-under=80

Environment Variables for CI

# .env.test: loaded in CI, never committed
AGENTFIELD_LOG_LEVEL=warning

# If you need real LLM calls in integration tests (rare):
# ANTHROPIC_API_KEY=sk-ant-...

Tips for Reliable CI Tests

- Mock all LLM calls in unit tests. Never depend on an API key for unit tests to pass.

- Use asyncio_mode = "auto" so you don't need @pytest.mark.asyncio on every test (pytest-asyncio 0.23+).

- Separate unit and integration tests with pytest markers. Run unit tests on every push, integration tests on PRs.

- Pin your agentfield version in pyproject.toml to avoid surprise breakage.

- Pin environment variables like AGENTFIELD_LOG_LEVEL in CI to control verbosity.
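One way to enforce the first tip is to make any un-mocked LLM call fail loudly instead of reaching a provider. The sketch below builds an AsyncMock whose side_effect raises. In a pytest suite this guard could be assigned to app.ai from an autouse fixture, with individual tests overriding it; that wiring is an assumption about your test setup, not an AgentField feature. FakeApp and summarize are hypothetical stand-ins so the example runs without the SDK.

```python
import asyncio
from unittest.mock import AsyncMock


class UnmockedLLMCall(AssertionError):
    """Raised when code under test reaches the LLM without an explicit mock."""


def guarded_ai() -> AsyncMock:
    # An exception instance used as side_effect is raised on every await of the mock.
    return AsyncMock(side_effect=UnmockedLLMCall("app.ai was called without a test mock"))


class FakeApp:
    """Hypothetical stand-in for an Agent instance."""

    def __init__(self) -> None:
        self.ai = guarded_ai()


app = FakeApp()


async def summarize(text: str) -> str:
    return await app.ai(system="Summarize.", user=text)


async def run_guard_demo() -> tuple[bool, str]:
    # 1) A test that forgot to mock fails immediately.
    try:
        await summarize("hello")
        blocked = False
    except UnmockedLLMCall:
        blocked = True

    # 2) A test that installs its own mock proceeds normally.
    app.ai = AsyncMock(return_value="short summary")
    return blocked, await summarize("hello")


blocked, summary = asyncio.run(run_guard_demo())
```

Because the guard raises an AssertionError subclass, a forgotten mock shows up as an ordinary test failure with a clear message rather than as a network error or an unexpected bill.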

TypeScript Testing

AgentField TypeScript agents receive a ReasonerContext in their handler. The context provides ctx.ai(), ctx.call(), ctx.memory, and ctx.note(). Test them with Jest or Vitest by mocking the context object.

Mocking ctx.ai()

// tests/classifier.test.ts
import { describe, it, expect, vi } from 'vitest';

// Create a mock context matching the ReasonerContext shape
function mockContext(input: Record<string, any>) {
  return {
    input,
    executionId: 'test-exec-1',
    ai: vi.fn(),
    call: vi.fn(),
    memory: {
      get: vi.fn(),
      set: vi.fn(),
      delete: vi.fn(),
    },
    note: vi.fn(),
    discover: vi.fn(),
  };
}

describe('classifier reasoner', () => {
  it('returns a valid classification', async () => {
    const ctx = mockContext({ text: 'How to configure Kubernetes pods' });

    // Mock what the LLM would return
    ctx.ai.mockResolvedValue({
      category: 'technical',
      confidence: 0.95,
    });

    // Import and call the handler directly
    const { classifyHandler } = await import('../src/agents/classifier');
    const result = await classifyHandler(ctx as any);

    expect(result.category).toBe('technical');
    expect(result.confidence).toBeGreaterThanOrEqual(0.9);
    expect(ctx.ai).toHaveBeenCalledOnce();
  });

  it('handles low confidence with fallback', async () => {
    const ctx = mockContext({ text: 'ambiguous input' });

    ctx.ai.mockResolvedValue({ category: 'unknown', confidence: 0.3 });
    ctx.call.mockResolvedValue({ category: 'billing', confidence: 0.85 });

    const { classifyHandler } = await import('../src/agents/classifier');
    const result = await classifyHandler(ctx as any);

    // Should have escalated to another agent
    expect(ctx.call).toHaveBeenCalledWith('classifier-v2.classify', expect.any(Object));
    expect(result.category).toBe('billing');
  });
});

Testing Reasoner Registration

// tests/agent.test.ts
import { describe, it, expect } from 'vitest';
import { Agent } from '@agentfield/sdk';
// Handlers come from your agent code; the summarizer path is a placeholder.
import { classifyHandler } from '../src/agents/classifier';
import { summarizeHandler } from '../src/agents/summarizer';

describe('agent setup', () => {
  it('registers reasoners via fluent API', () => {
    const agent = new Agent({ nodeId: 'test', version: '1.0.0' });

    // Register handlers (imported from your agent code)
    agent.reasoner('classify', classifyHandler);
    agent.reasoner('summarize', summarizeHandler);

    // Verify registration via the internal registry
    expect(agent.reasoners.get('classify')).toBeDefined();
    expect(agent.reasoners.get('summarize')).toBeDefined();
  });
});

TypeScript CI Configuration

// vitest.config.ts
import { defineConfig } from 'vitest/config';

export default defineConfig({
  test: {
    globals: true,
    environment: 'node',
    coverage: {
      provider: 'v8',
      thresholds: { lines: 80 },
    },
  },
});

Go Testing

The Go SDK uses standard testing patterns. Use table-driven tests for reasoners and InMemoryBackend for memory tests.

Table-Driven Tests for Reasoners

// agents/classifier_test.go
package agents

import (
	"context"
	"testing"
)

func TestClassifyReasoner(t *testing.T) {
	tests := []struct {
		name    string
		input   map[string]any
		wantCat string
		wantErr bool
	}{
		{
			name:    "technical question",
			input:   map[string]any{"text": "How to configure Kubernetes pods"},
			wantCat: "technical",
		},
		{
			name:    "billing question",
			input:   map[string]any{"text": "Why was I charged twice?"},
			wantCat: "billing",
		},
		{
			name:    "empty input",
			input:   map[string]any{"text": ""},
			wantErr: true,
		},
	}

	for _, tt := range tests {
		t.Run(tt.name, func(t *testing.T) {
			ctx := context.Background()
			result, err := classifyHandler(ctx, tt.input)

			if tt.wantErr {
				if err == nil {
					t.Fatal("expected error, got nil")
				}
				return
			}
			if err != nil {
				t.Fatalf("unexpected error: %v", err)
			}

			got := result.(map[string]any)["category"].(string)
			if got != tt.wantCat {
				t.Errorf("category = %q, want %q", got, tt.wantCat)
			}
		})
	}
}

InMemoryBackend for Memory Tests

// agents/memory_test.go
package agents

import (
	"context"
	"testing"

	"github.com/Agent-Field/agentfield/sdk/go/agent"
)

func TestMemoryRoundtrip(t *testing.T) {
	mem := agent.NewMemory(agent.NewInMemoryBackend())
	ctx := context.Background()

	// Set and get
	if err := mem.Set(ctx, "key1", "value1"); err != nil {
		t.Fatalf("Set failed: %v", err)
	}

	val, err := mem.Get(ctx, "key1")
	if err != nil {
		t.Fatalf("Get failed: %v", err)
	}
	if val != "value1" {
		t.Errorf("Get = %v, want %q", val, "value1")
	}

	// Typed retrieval (GetTyped is on ScopedMemory)
	type Prefs struct {
		Tone string `json:"tone"`
	}

	scoped := mem.SessionScope()
	scoped.Set(ctx, "prefs", map[string]any{"tone": "concise"})

	var prefs Prefs
	if err := scoped.GetTyped(ctx, "prefs", &prefs); err != nil {
		t.Fatalf("GetTyped failed: %v", err)
	}
	if prefs.Tone != "concise" {
		t.Errorf("Tone = %q, want %q", prefs.Tone, "concise")
	}
}

func TestMemoryScopeIsolation(t *testing.T) {
	mem := agent.NewMemory(agent.NewInMemoryBackend())
	ctx := context.Background()

	// Write to different scopes
	mem.GlobalScope().Set(ctx, "key", "global_value")
	mem.SessionScope().Set(ctx, "key", "session_value")

	// Each scope returns its own value
	gVal, _ := mem.GlobalScope().Get(ctx, "key")
	sVal, _ := mem.SessionScope().Get(ctx, "key")

	if gVal != "global_value" {
		t.Errorf("global = %v, want %q", gVal, "global_value")
	}
	if sVal != "session_value" {
		t.Errorf("session = %v, want %q", sVal, "session_value")
	}
}

Mocking AI Calls in Go

// agents/ai_test.go
package agents

import (
	"context"
	"fmt"
	"testing"

	"github.com/Agent-Field/agentfield/sdk/go/ai"
)

// MockAIClient implements a test double for AI calls
type MockAIClient struct {
	Responses []string
	callIdx   int
}

func (m *MockAIClient) Complete(ctx context.Context, prompt string, opts ...ai.Option) (*ai.Response, error) {
	// Return an error instead of panicking when more calls arrive than responses were configured.
	if m.callIdx >= len(m.Responses) {
		return nil, fmt.Errorf("MockAIClient: no response configured for call %d", m.callIdx+1)
	}
	resp := m.Responses[m.callIdx]
	m.callIdx++

	return &ai.Response{
		Choices: []ai.Choice{{
			Message: ai.Message{
				Content: []ai.ContentPart{{Type: "text", Text: resp}},
			},
		}},
	}, nil
}

func TestReasonerWithMockedAI(t *testing.T) {
	mock := &MockAIClient{
		Responses: []string{
			`{"category": "technical", "confidence": 0.95}`,
		},
	}

	// Inject mock into your handler or agent
	// Pattern: accept an AI interface in your handler for testability
	result, err := classifyWithAI(context.Background(), mock, map[string]any{
		"text": "Kubernetes question",
	})
	if err != nil {
		t.Fatal(err)
	}

	got := result.(map[string]any)["category"]
	if got != "technical" {
		t.Errorf("category = %v, want technical", got)
	}
}

Go CI Configuration

# .github/workflows/test.yml
name: Agent Tests (Go)

on: [push, pull_request]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-go@v5
        with:
          go-version: '1.22'
      - name: Run tests
        run: go test ./... -v -race -coverprofile=coverage.out
      - name: Check coverage
        run: |
          go tool cover -func=coverage.out
          COVERAGE=$(go tool cover -func=coverage.out | grep total | awk '{print $3}' | sed 's/%//')
          if (( $(echo "$COVERAGE < 80" | bc -l) )); then
            echo "Coverage ${COVERAGE}% is below 80%"
            exit 1
          fi